The Encryption Paradox

Encryption is the backbone of digital trust. Every web session, API call, and remote connection now travels through layers of Transport Layer Security (TLS), QUIC, or VPN tunnels. While this shields users from eavesdropping, it also blinds the very systems designed to protect them.

Modern Next-Generation Firewalls (NGFWs), Secure Access solutions, and intrusion detection platforms must inspect encrypted traffic without disrupting performance or privacy. That balance demands something few organizations discuss openly: large-scale simulation of the encrypted internet itself.

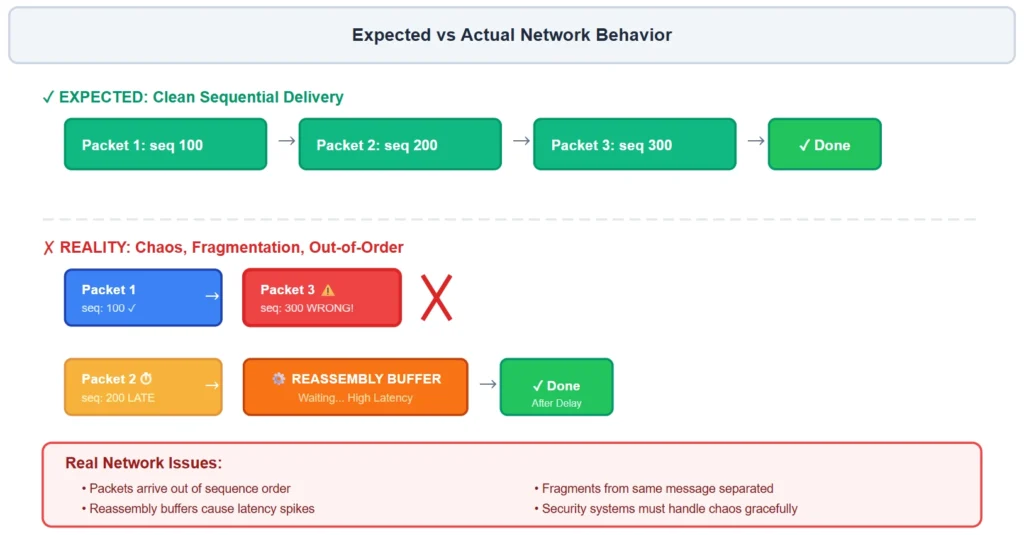

The Real Internet Isn't Clean

Most testing labs use predictable, perfectly formed TLS sessions. Real networks are far messier. In production, security devices see:

- Fragmented or out-of-order packets

- Jumbo TLS records and mixed cipher preferences

- Browser fingerprints that vary with every update

- API clients that reuse session tickets aggressively

- Certificate chains that change daily as CAs re-sign intermediates

Each small deviation can expose timing, parsing, or policy handling flaws that static regression tests will never find.

To build resilient inspection systems, engineers now recreate that chaos on purpose, using automated frameworks that generate millions of encrypted flows, mutate handshakes, and inject noise into the protocol stream. The goal is not perfection; it’s realism.
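As a rough illustration of what "mutating handshakes and injecting noise" can mean in practice, the sketch below shuffles the ordering of a ClientHello description and delivers a payload as out-of-order fragments. Everything here is an assumption for illustration: `HandshakeProfile`, `mutate`, and `fragment_out_of_order` are hypothetical names, and the profile is a plain description rather than a wire-format encoder.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class HandshakeProfile:
    """Hypothetical description of a ClientHello (not a wire encoder)."""
    cipher_suites: tuple
    extensions: tuple
    record_fragments: int = 1  # how many pieces the hello is split into


def mutate(profile, rng):
    """Return a variant with shuffled ordering and a random fragment count."""
    suites = list(profile.cipher_suites)
    extensions = list(profile.extensions)
    rng.shuffle(suites)
    rng.shuffle(extensions)
    return replace(
        profile,
        cipher_suites=tuple(suites),
        extensions=tuple(extensions),
        record_fragments=rng.randint(1, 4),
    )


def fragment_out_of_order(payload, pieces, rng):
    """Split payload into (offset, chunk) pairs and shuffle delivery order."""
    size = max(1, -(-len(payload) // pieces))  # ceiling division
    chunks = [(off, payload[off:off + size])
              for off in range(0, len(payload), size)]
    rng.shuffle(chunks)
    return chunks


def reassemble(chunks):
    """Reference reassembly: what a correct inspection engine must recover."""
    return b"".join(chunk for _, chunk in sorted(chunks))
```

Seeding the generator (for example `random.Random(7)`) keeps each mutated flow reproducible, so a failure found in one run can be replayed exactly in the next.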

Simulating the Modern Encryption Stack

A realistic simulation environment must span every layer of today’s encryption ecosystem:

Each simulated handshake is evaluated not only for decryption success but for policy correctness: did the system decrypt, bypass, or block according to rule hierarchy and certificate trust?
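A policy-correctness check of this kind might look like the minimal sketch below. The rule model is hypothetical: it assumes lower priority numbers win, that certificate trust is evaluated before category rules, and that untrusted certificates are blocked. A real product's hierarchy will differ.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    DECRYPT = "decrypt"
    BYPASS = "bypass"
    BLOCK = "block"


@dataclass(frozen=True)
class Rule:
    priority: int            # assumption: lower number takes precedence
    category: Optional[str]  # None matches any category
    action: Action


@dataclass(frozen=True)
class Flow:
    sni: str
    category: str
    cert_trusted: bool


def expected_action(flow, rules, untrusted_action=Action.BLOCK,
                    default=Action.DECRYPT):
    """Compute the action the device should take for a simulated flow."""
    # Assumption: certificate trust is checked before any category rule.
    if not flow.cert_trusted:
        return untrusted_action
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.category is None or rule.category == flow.category:
            return rule.action
    return default


def policy_correct(flow, observed, rules):
    """True when the device's observed action matches the expected one."""
    return observed is expected_action(flow, rules)
```

The simulator records what the device actually did for each flow and passes it as `observed`; any mismatch is a policy bug even if decryption itself succeeded.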

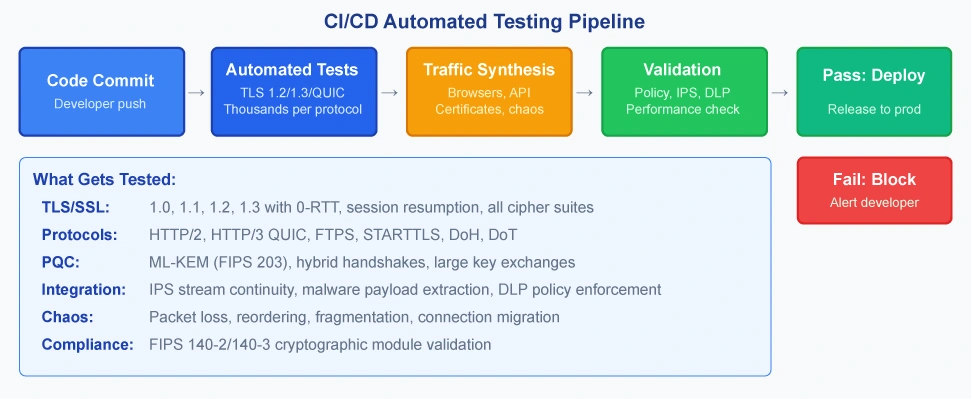

Automation at the Core

Manual testing cannot keep pace with encryption’s diversity. Continuous integration pipelines now generate and analyze encrypted traffic at scale:

- Traffic Synthesizers: Build browser, API, and IoT TLS fingerprints.

- Certificate Engines: Produce expired, pinned, or self-signed chains.

- Policy Validators: Confirm the right decrypt/bypass actions.

- Telemetry Collectors: Track latency, handshake counts, and CPU cost.

- FIPS Compliance Validators: Ensure cryptographic operations use FIPS 140-2/140-3 validated modules.

Continuous test runs produce extensive metrics, flagging regressions in latency or visibility long before software reaches production. With thousands of automated tests per protocol, this turns decryption testing from a one-time QA step into a continuous resilience program.
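One simple way a pipeline could flag a latency regression is to compare the 95th-percentile handshake latency of a candidate build against a stored baseline. The sketch below is an assumption about how such a gate might work; the 10% tolerance and the function names are illustrative, not taken from any particular product.

```python
import statistics


def p95(samples):
    """95th percentile; statistics.quantiles needs at least two samples."""
    return statistics.quantiles(samples, n=100)[94]


def latency_regressed(baseline_ms, candidate_ms, tolerance=0.10):
    """True when the candidate's p95 latency exceeds the baseline's p95
    by more than the given fractional tolerance (hypothetical default)."""
    return p95(candidate_ms) > p95(baseline_ms) * (1 + tolerance)
```

A CI job could fail the build whenever `latency_regressed` returns `True`, using handshake latencies gathered by the telemetry collectors for the same traffic mix on both builds.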

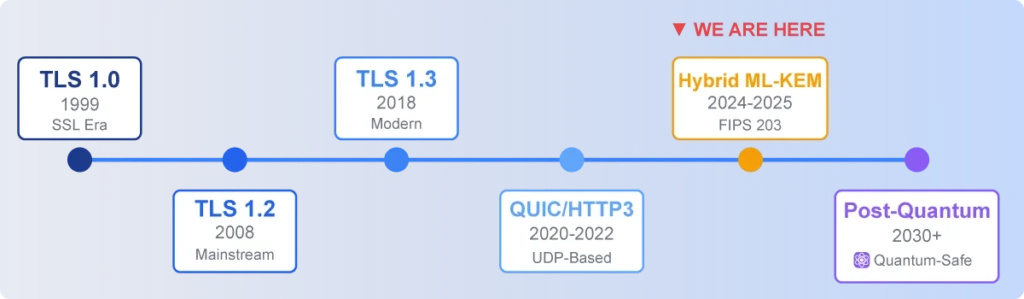

PQC: Preparing for the Quantum Era

The next frontier is Post-Quantum Cryptography (PQC). Quantum computers could someday break classical public-key algorithms like RSA and ECDSA. Browser vendors and cloud providers are already deploying hybrid TLS 1.3 handshakes that combine traditional elliptic-curve key exchange with quantum-safe algorithms such as ML-KEM (FIPS 203), the NIST-standardized version of CRYSTALS-Kyber.

Testing these hybrids matters today. They change handshake sizes, timing, and fallback behavior. A firewall or proxy unprepared for PQC may misclassify sessions or fail open. Simulation frameworks now include PQC variants to ensure inspection pipelines remain compatible and performant as the internet transitions to quantum-resistant cryptography.
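To make the size change concrete, the sketch below adds an X25519 public key (32 bytes) to the ML-KEM encapsulation-key and ciphertext sizes listed in FIPS 203, then checks whether the resulting ClientHello still fits in one TCP segment. The 1,460-byte segment size and the 300 bytes assumed for the rest of the ClientHello are illustrative assumptions, not measurements.

```python
X25519_PUBLIC_KEY = 32  # bytes

# (encapsulation key, ciphertext) sizes in bytes, per FIPS 203
ML_KEM_SIZES = {
    "ML-KEM-512": (800, 768),
    "ML-KEM-768": (1184, 1088),
    "ML-KEM-1024": (1568, 1568),
}

ASSUMED_MSS = 1460               # assumption: typical Ethernet TCP segment
ASSUMED_OTHER_HELLO_BYTES = 300  # assumption: remaining ClientHello fields


def hybrid_share_sizes(param_set):
    """Return (client share, server share) sizes for X25519 plus ML-KEM."""
    encaps_key, ciphertext = ML_KEM_SIZES[param_set]
    return encaps_key + X25519_PUBLIC_KEY, ciphertext + X25519_PUBLIC_KEY


def exceeds_single_segment(client_share,
                           other_bytes=ASSUMED_OTHER_HELLO_BYTES,
                           mss=ASSUMED_MSS):
    """True when the ClientHello would span more than one TCP segment."""
    return client_share + other_bytes > mss
```

Under these assumptions a classical X25519 ClientHello fits comfortably in one segment, while the hybrid with ML-KEM-768 does not, which is why reassembly paths that were rarely exercised before now sit on the hot path.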

FIPS Compliance: For government and regulated industries, all cryptographic operations must use FIPS 140-2 or FIPS 140-3 validated modules. Testing validates that ML-KEM implementations operate in FIPS-approved mode, key generation follows approved methods, and fallback to non-FIPS algorithms is prevented. This ensures compliance even as new post-quantum standards are introduced.

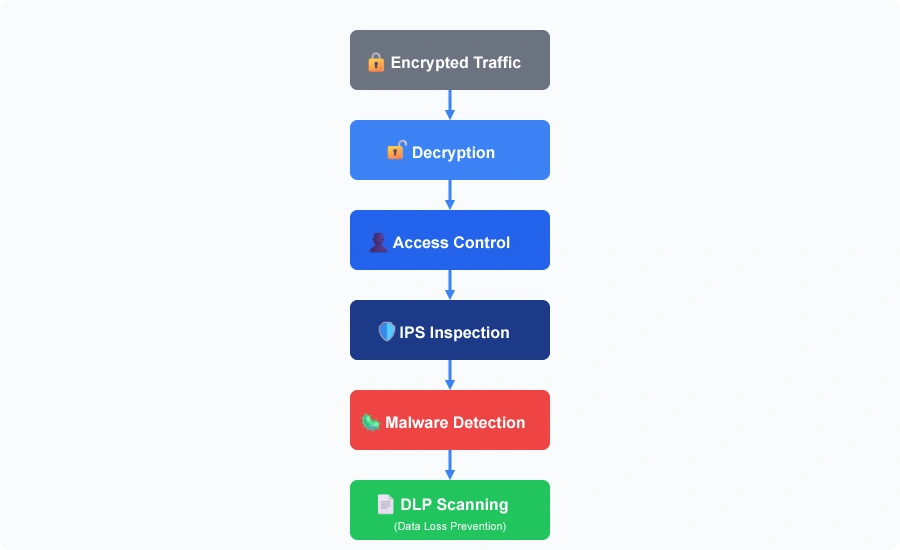

Integrating Policy and Threat Detection

Decryption simulation is not just about cryptography; it verifies the full security workflow:

- Access Control: Ensuring decrypted identities map to correct policies.

- Intrusion Prevention: Confirming stream continuity after decryption.

- Malware Inspection: Validating that extracted payloads reach analysis engines intact.

- Privacy Compliance: Enforcing “bypass” for financial or healthcare categories.

Each simulation run validates these interactions under stress, proving that visibility and compliance can coexist.

Lessons from Chaos

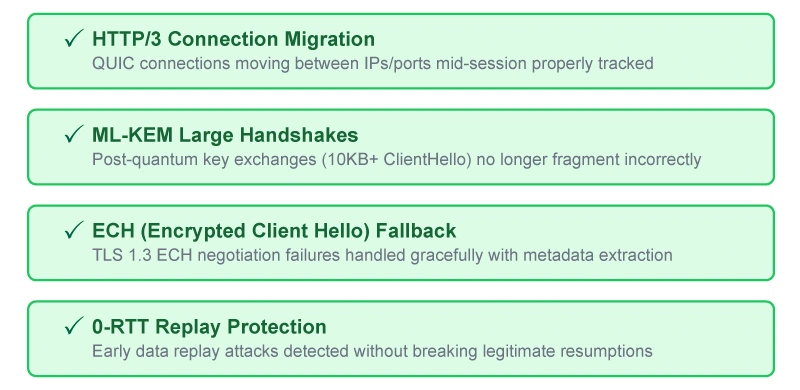

Years of simulation reveal patterns shared across the industry:

- HTTP/3 Connection Migration: QUIC connections moving between IPs/ports mid-session require proper connection tracking.

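- ML-KEM Large Handshakes: Hybrid post-quantum key shares enlarge the ClientHello beyond a single packet (an ML-KEM-768 encapsulation key alone is 1,184 bytes), so it can be split across network boundaries in ways that inspection devices reassemble incorrectly.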

- ECH (Encrypted Client Hello) Fallback: TLS 1.3 ECH negotiation failures must be handled gracefully with metadata extraction.

- 0-RTT Replay Protection: Early data replay attacks must be detected without breaking legitimate session resumptions.

By reproducing such conditions in-house, organizations prevent outages and silent inspection failures later.
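The 0-RTT case lends itself to a small test oracle. The sketch below is a simplified replay cache under stated assumptions: it treats a repeat of the same (ticket, early data) pair within a time window as a replay, while the same ticket carrying different early data is allowed through. `EarlyDataReplayCache` and its 10-second window are hypothetical, and real TLS 1.3 anti-replay mechanisms are more involved than this.

```python
import hashlib


class EarlyDataReplayCache:
    """Hypothetical oracle: remembers recent (ticket, early data) digests."""

    def __init__(self, window_seconds=10.0):
        self.window = window_seconds
        self._seen = {}  # digest -> timestamp when first seen

    def _evict(self, now):
        """Drop entries older than the replay window."""
        expired = [d for d, t in self._seen.items() if now - t > self.window]
        for digest in expired:
            del self._seen[digest]

    def is_replay(self, ticket_id, early_data, now):
        """Return True if this exact early-data flight was seen recently."""
        self._evict(now)
        # Length-prefix the ticket so (ticket, data) pairs cannot collide.
        material = len(ticket_id).to_bytes(4, "big") + ticket_id + early_data
        digest = hashlib.sha256(material).digest()
        if digest in self._seen:
            return True
        self._seen[digest] = now
        return False
```

In a simulation run, the harness replays captured early data on purpose and checks that the device under test rejects the flights this oracle marks as replays while still accepting the rest.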

The Road Ahead

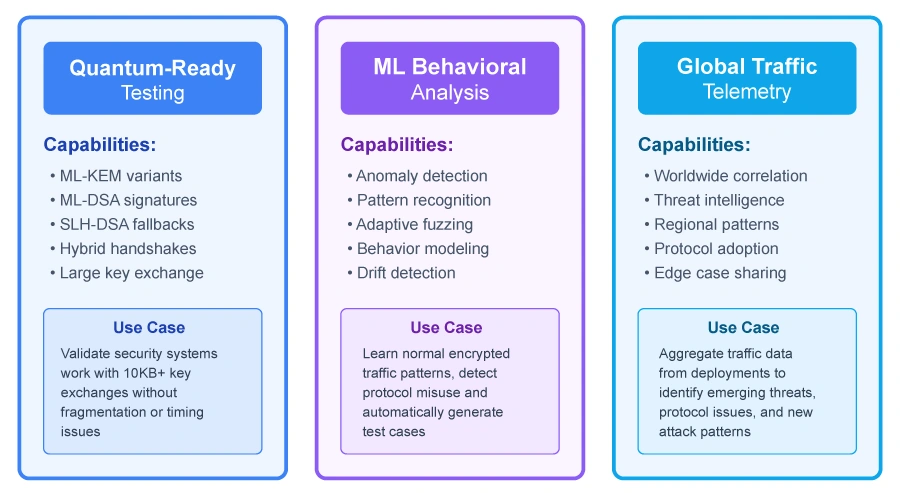

Encryption is evolving faster than static security models can adapt. The next generation of testing will depend on:

- Quantum-Ready Testing: Validating security systems against ML-KEM, ML-DSA, and hybrid post-quantum handshakes to ensure compatibility with their much larger keys, signatures, and handshake messages.

- ML Behavioral Analysis: Learning normal encrypted traffic patterns through anomaly detection, adaptive fuzzing, and behavior modeling to automatically generate test cases.

- Global Traffic Telemetry: Aggregating deployment data worldwide to identify emerging threats, protocol issues, and regional attack patterns through shared intelligence.

Resilient security systems will emerge from this blend of quantum readiness, machine learning insights, and collaborative intelligence.
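As a deliberately small stand-in for the behavioral analysis described above, the sketch below learns a baseline from observed ClientHello sizes and flags values more than three standard deviations away. It is a plain z-score test rather than a machine learning model, and the threshold and function names are assumptions for illustration.

```python
import statistics


def fit_baseline(sizes):
    """Learn mean and sample standard deviation from normal traffic."""
    return statistics.fmean(sizes), statistics.stdev(sizes)


def is_anomalous(value, mean, stdev, threshold=3.0):
    """Flag values whose z-score exceeds the (hypothetical) threshold."""
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > threshold
```

Flows flagged this way could seed the next round of fuzzing, so the test corpus drifts toward whatever the baseline has not yet seen.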

Conclusion

Building trust in a fully encrypted internet requires seeing through the noise safely, responsibly, and ahead of attackers. By simulating the unpredictability of real-world encrypted traffic and preparing for quantum-era cryptography, cybersecurity engineers can ensure that visibility and protection evolve together.

In the end, resilience is not about decrypting everything. It’s about testing against everything the internet and the future can throw at us.

Tags: Cybersecurity, Network Security, NGFW, Post-Quantum Cryptography, PQC, Threat Detection, TLS